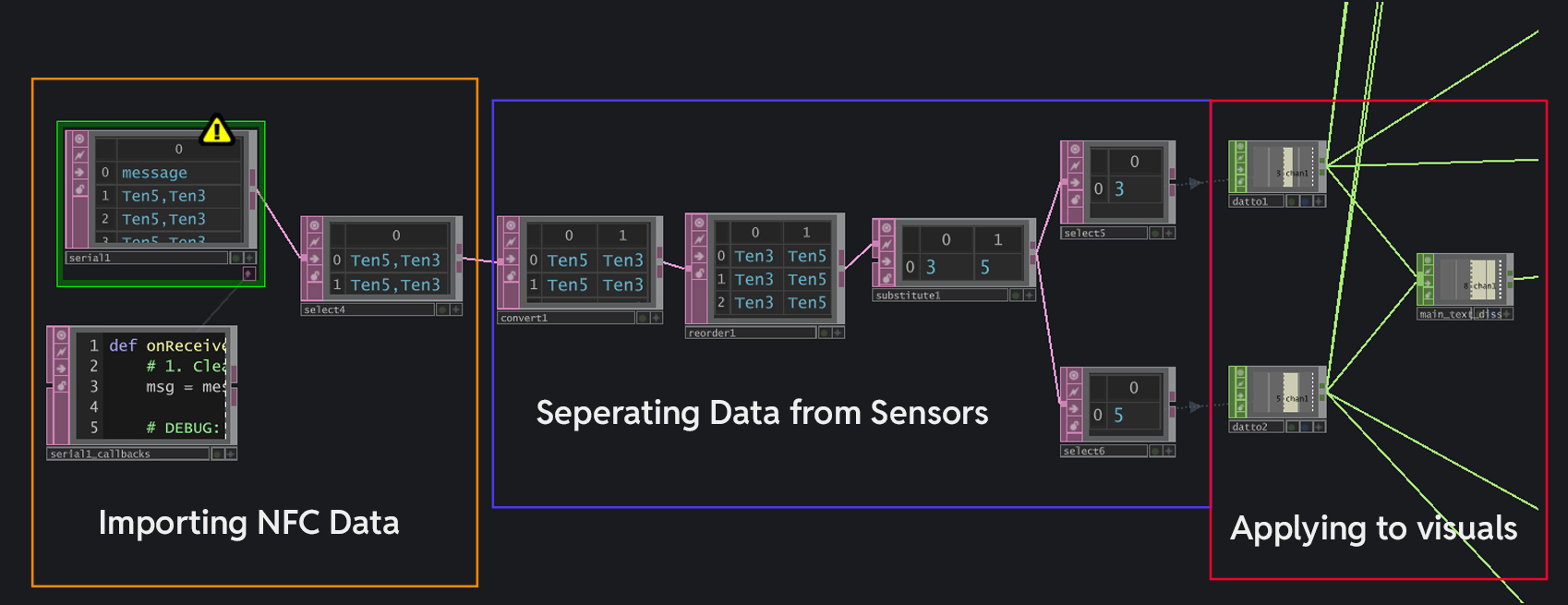

Importing the NFC readers' data into TouchDesigner

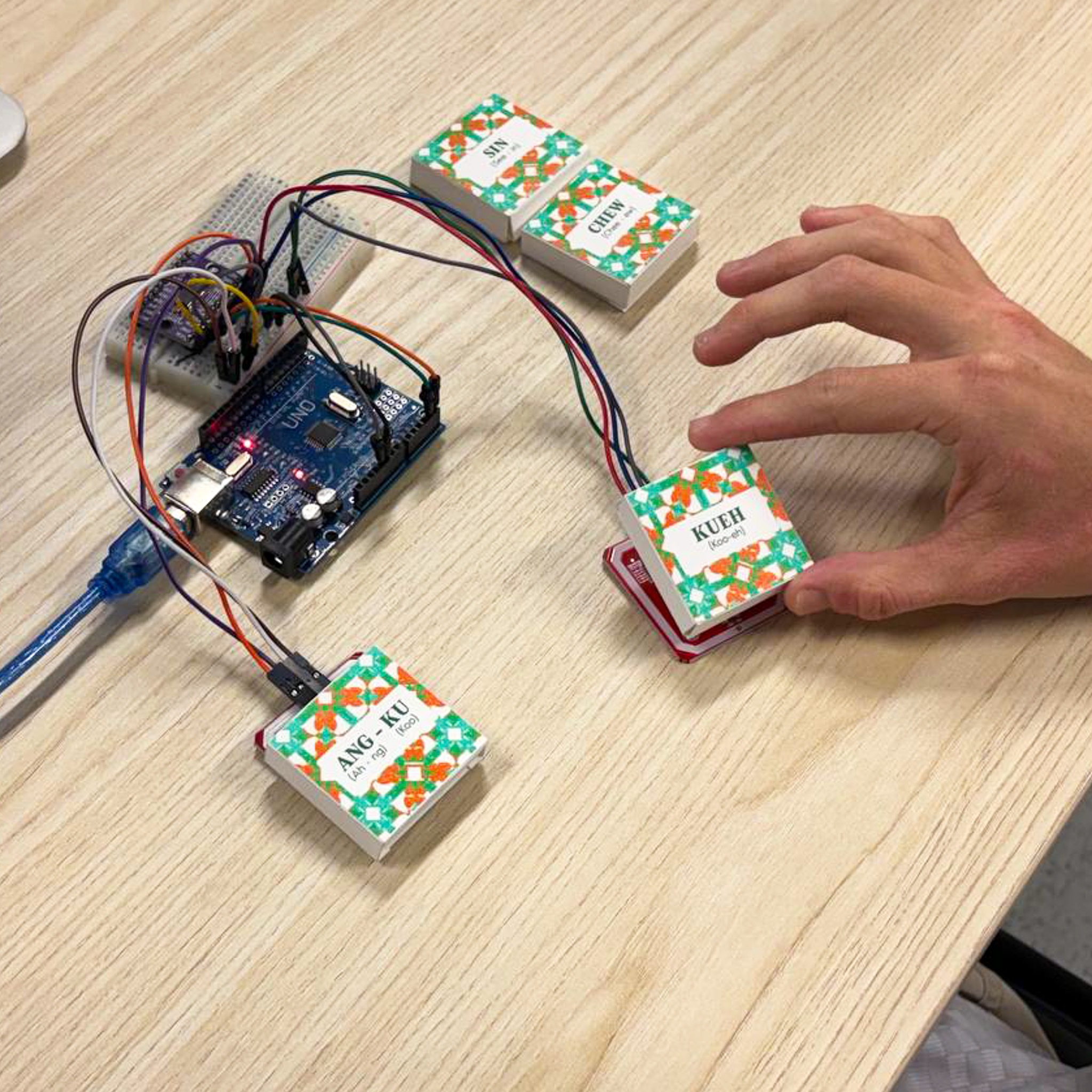

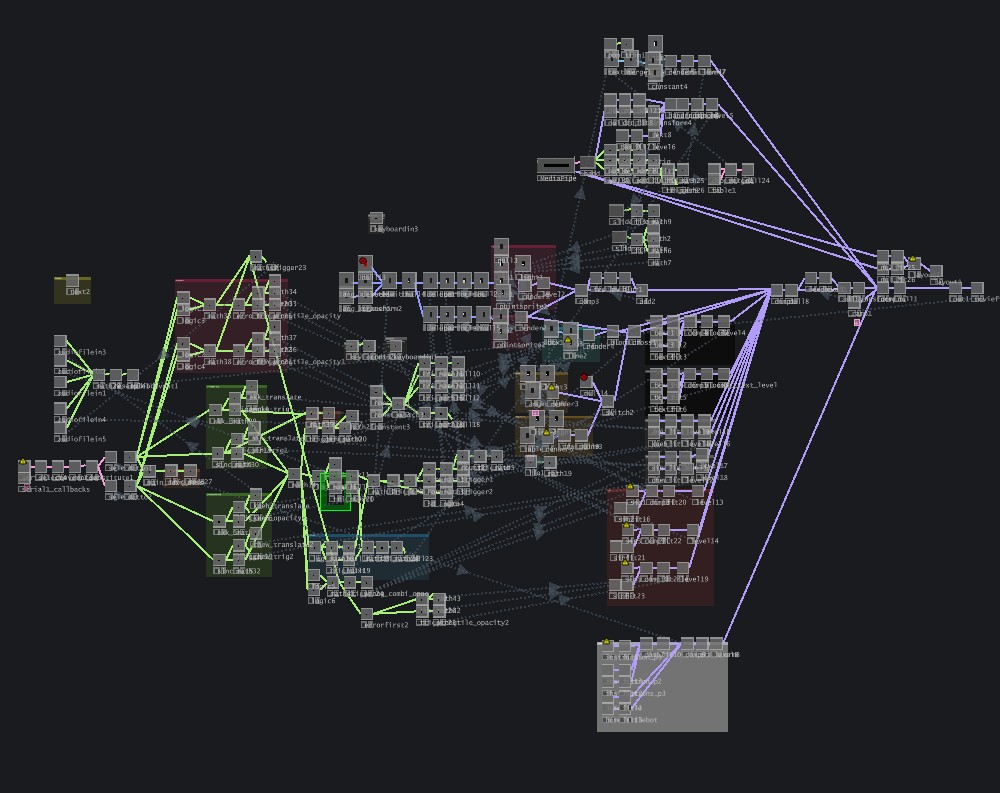

With the NFC readers working well with the NFC chips, I proceeded to integrate them into TouchDesigner (TD) so that they could change the visuals directly. The data received from the NFC readers was imported into TouchDesigner with a Serial DAT. The data came in as one string, which I separated and then converted to CHOP data to apply to the parameters of the visuals and trigger their appearance. This is the part where things start to get messy, as I have code to account for all the scenarios that can happen (e.g. wrong and right inputs trigger different visuals and text).
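To give a rough idea of the "separate the string, then convert to numbers" step, here is a minimal Python sketch. It is not the actual network: the line format (`R1:04A1B2,R2:NONE`), the reader names, the tag UIDs, and both function names are assumptions made up for illustration. In TD, something like this could be called from the Serial DAT's callbacks, with the resulting numbers written into channels.

```python
from typing import Dict, Optional


def parse_reader_line(line: str) -> Dict[str, Optional[str]]:
    """Split one serial line into {reader_id: tag_uid or None}.

    Assumed (hypothetical) format: "R1:04A1B2,R2:NONE,R3:04C3D4"
    """
    readings: Dict[str, Optional[str]] = {}
    for field in line.strip().split(","):
        if ":" not in field:
            continue  # skip malformed fields instead of crashing
        reader_id, uid = field.split(":", 1)
        uid = uid.strip().upper()
        readings[reader_id.strip()] = None if uid in ("", "NONE") else uid
    return readings


def to_channel_values(readings: Dict[str, Optional[str]],
                      tag_ids: Dict[str, int]) -> Dict[str, float]:
    """Map each reader to a number: the tile's id, or 0.0 for no/unknown tag."""
    return {reader: float(tag_ids.get(uid, 0)) for reader, uid in readings.items()}


# Hypothetical lookup from tag UID to a tile number.
TAG_IDS = {"04A1B2": 1, "04C3D4": 2}

readings = parse_reader_line("R1:04a1b2,R2:NONE,R3:04C3D4\n")
channels = to_channel_values(readings, TAG_IDS)
print(channels)  # {'R1': 1.0, 'R2': 0.0, 'R3': 2.0}
```

Keeping the parsing separate from the number conversion means a malformed or empty reading simply becomes 0.0, which is easy to treat as "no tile" further down the chain.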

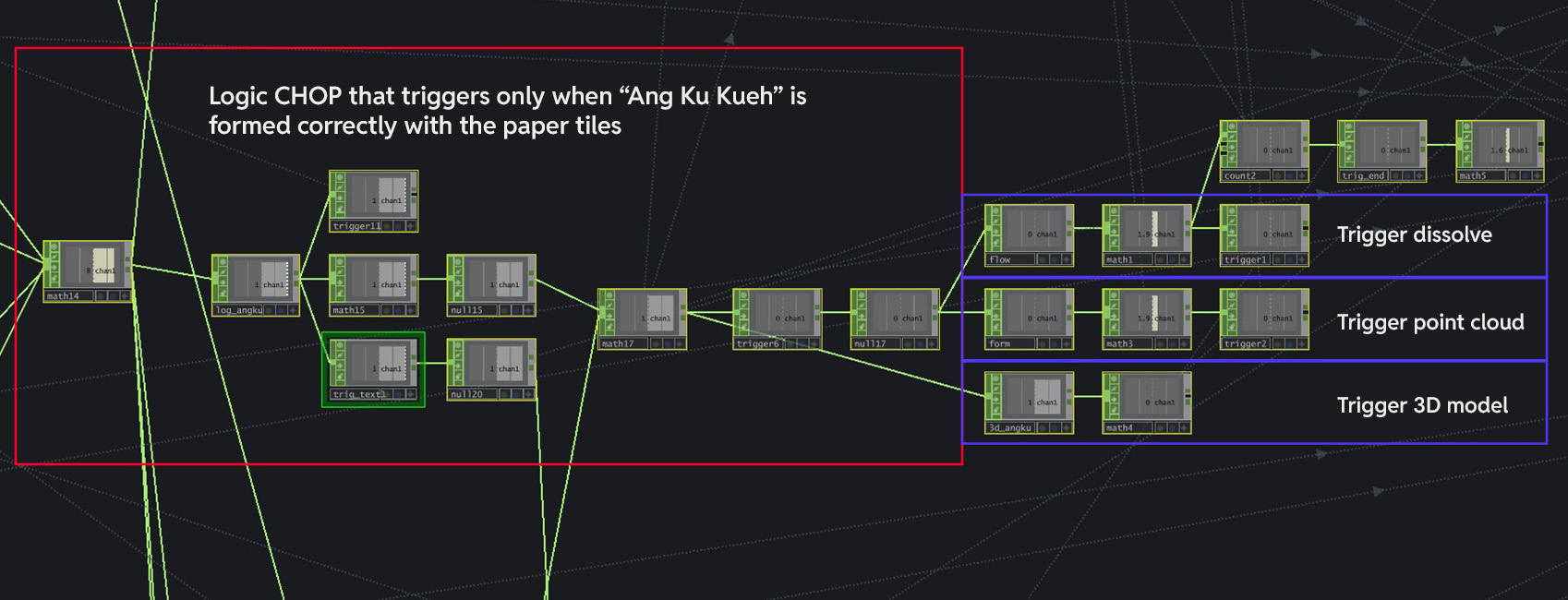

An example of the application of received NFC data

Above is an example of how I extracted the NFC data to be applied to the visuals in TD. I started by adding a Logic CHOP that only triggers when the word "Ang Ku Kueh" is formed correctly with the paper tiles. This Logic CHOP is then connected to more CHOPs that remap its current values to normalized values that can be applied to the visuals directly (e.g. driving the opacity of the Point Cloud or 3D Model to make them appear).
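The same idea can be written out in plain Python as a rough sketch. The real version lives in CHOPs, so this only mirrors the logic: a check that outputs 1 only when the tiles spell the word in the right order, followed by a linear remap to a 0 to 1 range. The split of the word into three tiles and the function names are assumptions for illustration.

```python
# Assumed tile order for the target word (hypothetical split into three tiles).
TARGET_WORD = ("Ang", "Ku", "Kueh")


def word_is_formed(tiles, target=TARGET_WORD):
    """Return 1.0 only when every slot holds the expected tile, in order."""
    return 1.0 if tuple(tiles) == tuple(target) else 0.0


def remap(value, in_low, in_high, out_low=0.0, out_high=1.0):
    """Linearly rescale value from [in_low, in_high] to [out_low, out_high], clamped."""
    if in_high == in_low:
        raise ValueError("input range must not be empty")
    t = (value - in_low) / (in_high - in_low)
    t = max(0.0, min(1.0, t))  # clamp so the opacity never leaves its range
    return out_low + t * (out_high - out_low)


# A correct word gives full opacity; a wrong order keeps the visual hidden.
opacity_right = remap(word_is_formed(["Ang", "Ku", "Kueh"]), 0, 1)
opacity_wrong = remap(word_is_formed(["Ku", "Ang", "Kueh"]), 0, 1)
print(opacity_right, opacity_wrong)  # 1.0 0.0
```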

I had to code for the different interaction stages that occur within the interface, such as when an input is detected (the detected word appears), and for the playback of audio cues resulting from user inputs. Each piece of code might seem small and simple for the one small outcome I wanted to achieve (like triggering audio), but there were so many required outcomes that it slowly accumulated into this monster web of nodes (spoiler alert: it gets way worse later, but I love it).
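As one small illustration of these "small outcome" pieces, here is a hedged Python sketch of triggering an audio cue once when a word is first detected, rather than on every frame the tiles stay in place. The class and method names are hypothetical, and the actual project does this with nodes rather than a class like this.

```python
class CueTrigger:
    """Fires once each time the detected word changes to a new, non-empty value."""

    def __init__(self):
        self._last_word = None

    def update(self, detected_word):
        """Return the word whose audio cue should play now, or None."""
        fire = detected_word is not None and detected_word != self._last_word
        self._last_word = detected_word
        return detected_word if fire else None


trigger = CueTrigger()
frames = [None, "Ang Ku Kueh", "Ang Ku Kueh", None, "Ang Ku Kueh"]
cues = [trigger.update(word) for word in frames]
print(cues)  # [None, 'Ang Ku Kueh', None, None, 'Ang Ku Kueh']
```

The cue plays when the word first appears, stays silent while the tiles remain on the readers, and plays again only after the tiles are removed and placed back.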

The current monster web of nodes

-

Current outcome of Prototype Two - Language Tiles

I tried to slot the NFC readers into the paper platforms and realized that they did not read the NFC tags' data well. The issue was the proximity of the NFC readers: the platform constrained them within a tight space, which caused their readings to clash. This is something I need to keep in mind for the next iteration.

The failed paper platform

For my dissertation, I needed to conduct a user testing session with this prototype so that I could write my critical journal. The user testing aimed to uncover the impact of including auditory feedback and tactile interactions in the context of cultural learning. Three different variations of the prototype were presented to participants.

(1) Users press keys "1" and "2" to change the visuals. No verbal feedback that pronounces the paired word back to users.

(2) Same keyboard input, but now with verbal pronunciation feedback so users know how the word is said.

(3) Using the language tile input instead of the keyboard, with verbal feedback. This is to test how effective integrating tactile interactions is.

The prototypes were evaluated in the following conditions: (1) version one compared with version two, and (2) version two compared with version three. The first two versions, using only keyboard inputs, tested the effectiveness of auditory feedback within the interface experience. The second and third versions tested the impact of introducing novel and tactile interaction.

After testing, users completed a Google Form with qualitative and quantitative questions about their learning and engagement. A total of seven users took part in the testing.

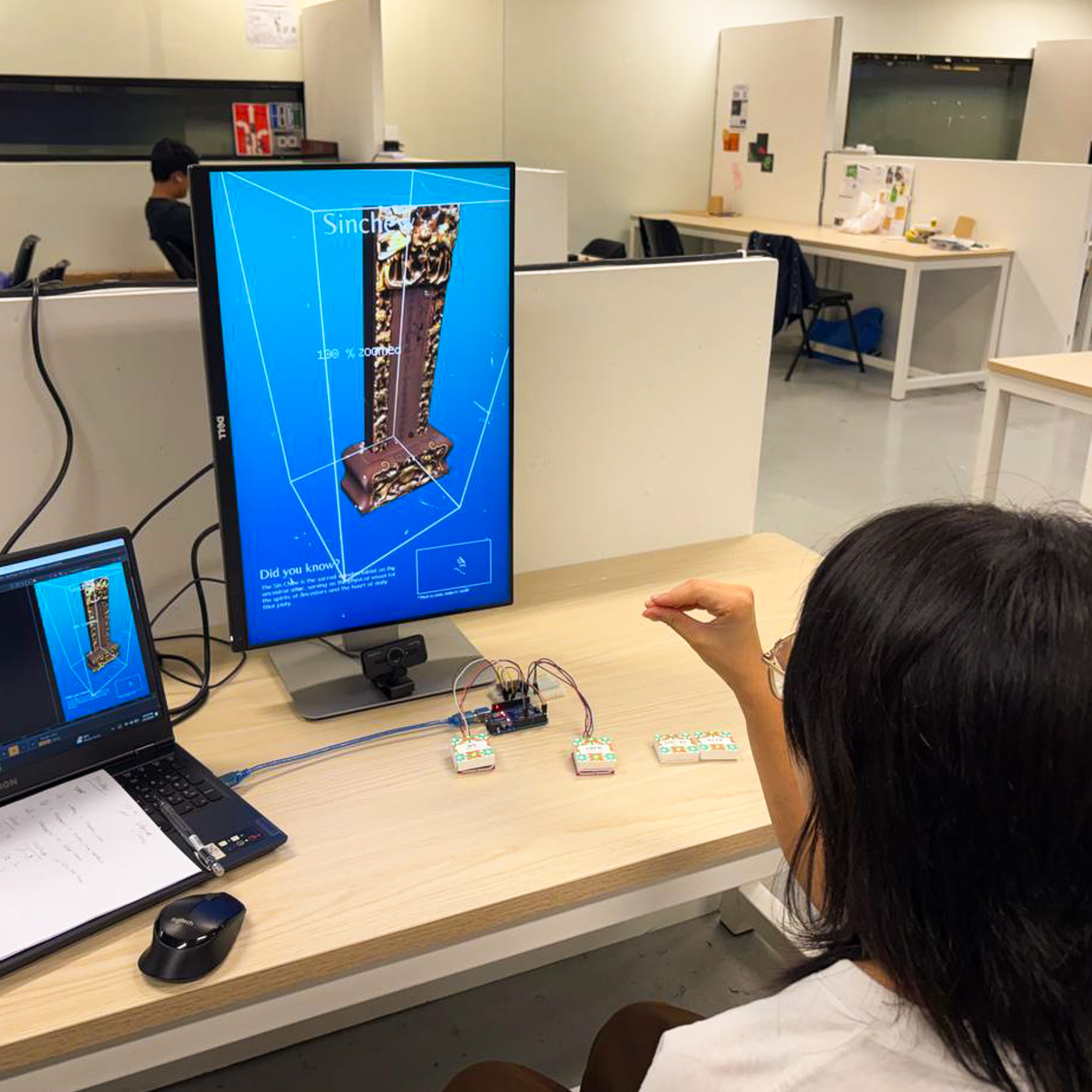

Participants testing the interface variations

The results from the post-experience survey were largely positive.

Users rated variation (2) as more effective than variation (1) for learning Baba Malay, highlighting the value of verbal feedback. P1 specifically noted that this feedback helped them learn the correct pronunciation.

Users rated variation (3) as more effective than variation (2), noting that tactile interaction sustained engagement. As P2 highlighted, "piecing the words together, along with audio, helps me bring the word [pronunciation] together."

Overall, variation (3) was perceived by participants to be the most engaging and informative. P3 stated that “combining words to create interactions was both highly intuitive and provided a very engaging and enjoyable experience.”

Participants suggested some improvements, mainly concerning interaction feedback and expansion of content. Some participants suggested having the interface verbalize the artefact description to further facilitate their learning. P4 also wanted the ability to rotate the artefact 360 degrees, which was previously dismissed due to technical limitations. P5 suggested adding more Baba Malay terms and tiles, as well as more information about the artefact, to allow for further learning beyond the current set of words.

It was great to finally put the interface out for user testing. While the survey results reflected the capabilities of the interface in terms of user engagement and learning, there was still much room for improvement, as raised in the participants' feedback and comments. Moving forward, I should seek to expand the learning effectiveness of the interface (e.g. by adding more tiles and information about the artefacts) and make other UI improvements, such as sound or visual cues that guide users through the interface.

With these results, I was finally able to continue writing my dissertation's critical journal!